LMQL

About LMQL

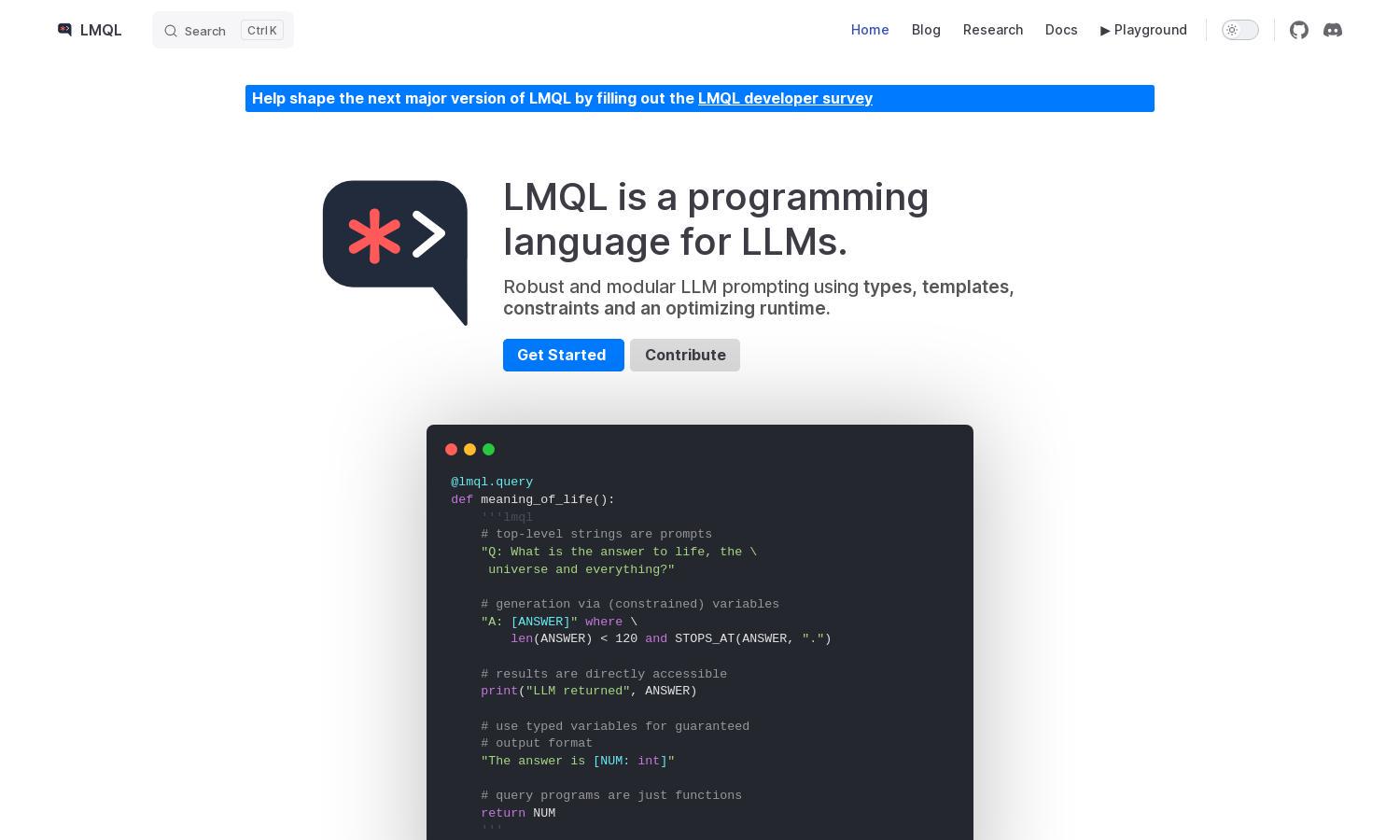

LMQL is a programming language for interacting with large language models (LLMs). It lets developers build prompts from modules, types, and templates, and its nested queries bring procedural programming to LLM prompting, which makes it useful to AI developers and researchers.

LMQL is an open-source project and is free to use. Any costs come from the model backend a query runs against, for example a paid API.

LMQL also provides a browser-based Playground for writing and running queries. It gives direct access to key features such as nested queries, so both beginners and advanced users can build and refine their prompts.

How LMQL works

To get started with LMQL, users install it as a Python package or try it in the browser. Queries are written in LMQL's own Python-based syntax. Nested queries and typed variables give fine-grained control over prompt generation, and the same query can be run against different model backends.

Key Features of LMQL

Nested Queries

LMQL's nested queries bring procedural programming to prompts: one query can call another, much as one function calls another. This lets developers split a prompt into modules and reuse the same components across queries.
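The idea of a query calling a reusable sub-query can be sketched in plain Python. This is an analogy only, not LMQL syntax or the LMQL API: `stub_model`, `chain_of_thought`, and `date_question` are hypothetical names, and the "model" returns canned text so the example runs without any backend.

```python
from typing import Callable

# Hypothetical stand-in for an LLM call: maps a prompt to a completion.
Model = Callable[[str], str]


def stub_model(prompt: str) -> str:
    """Canned completions so the sketch runs without a model backend."""
    if prompt.endswith("Let's think step by step. "):
        return "100 days before August 12 is May 4."
    if prompt.endswith("Therefore the answer is "):
        return "May 4, 2020."
    return ""


def chain_of_thought(prompt: str, model: Model) -> str:
    """Reusable sub-query: reason first, then return only the final answer."""
    prompt += "Let's think step by step. "
    reasoning = model(prompt)
    prompt += reasoning + "\nTherefore the answer is "
    return model(prompt).strip()


def date_question(model: Model) -> str:
    """Outer query: delegates its answer slot to the nested sub-query."""
    prompt = "Q: It is August 12th, 2020. What date was it 100 days ago?\nA: "
    answer = chain_of_thought(prompt, model)
    return prompt + answer


if __name__ == "__main__":
    print(date_question(stub_model))
```

The outer function never sees the intermediate reasoning text; it only receives the value the sub-query returns. That separation is what makes a component like `chain_of_thought` reusable in other prompts.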

Modular LLM Prompting

LMQL supports modular prompting through types, constraints, and templates. A template marks the places the model fills in, while types and constraints restrict what the model may produce there, so prompts stay robust while remaining customizable for each application.
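As a rough illustration of a template slot with a type and a constraint, the plain-Python sketch below fills one slot and validates the result afterwards. It is not LMQL code: `fill_slot`, `stub_model`, and the `[COUNT]` marker are hypothetical, and this sketch only checks the completion after generation, which is a simplification and does not show how LMQL itself handles constraints.

```python
from typing import Callable, TypeVar

T = TypeVar("T")

# Hypothetical stand-in for an LLM call: maps a prompt to a completion.
Model = Callable[[str], str]


def stub_model(prompt: str) -> str:
    """Canned completion so the sketch runs without a model backend."""
    return " 7\n"


def fill_slot(
    template: str,
    slot: str,
    model: Model,
    convert: Callable[[str], T],
    check: Callable[[T], bool],
) -> T:
    """Fill one [SLOT] in a template, then apply a type and a constraint."""
    marker = f"[{slot}]"
    prefix, found, _ = template.partition(marker)
    if not found:
        raise ValueError(f"template has no {marker} slot")
    raw = model(prefix)            # the model only sees text before the slot
    value = convert(raw.strip())   # "type": conversion raises ValueError on bad text
    if not check(value):           # "constraint": reject out-of-range values
        raise ValueError(f"{slot}={value!r} violates its constraint")
    return value


if __name__ == "__main__":
    template = "Q: How many days are in a week?\nA: [COUNT]"
    days = fill_slot(template, "COUNT", stub_model, int, lambda n: 1 <= n <= 31)
    print(days)
```

Keeping the conversion and the check as separate arguments means the same template can be reused with a stricter or looser constraint without changing the prompt text.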

Optimizing Runtime

LMQL's optimizing runtime executes queries efficiently. This helps reduce response times and makes LLM applications more responsive and reliable in practice.
