LLMWise vs ltx2.site

Side-by-side comparison to help you choose the right tool.

LLMWise

LLMWise offers a single API to access and compare 62 AI models, routing each prompt to a suitable model, with pay-per-use pricing.

Last updated: February 26, 2026

ltx2.site

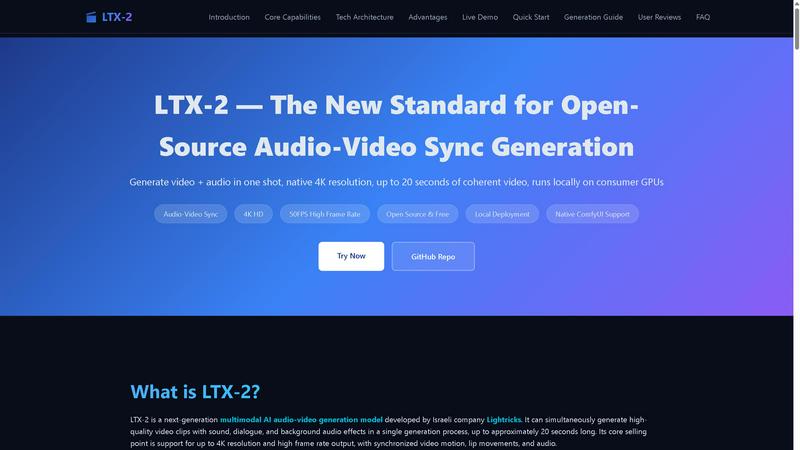

LTX-2 is an open-source AI model that generates synchronized 4K video and audio locally in a single step.

Last updated: February 28, 2026

Feature Comparison

LLMWise

Smart Routing

Smart routing is a central feature of LLMWise: it directs each prompt to the LLM best suited to it. For instance, coding requests can be sent to GPT, while creative writing tasks may go to Claude. Selecting the model per request is intended to improve accuracy and performance for each kind of task without the caller having to choose a model manually.
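The routing idea can be illustrated with a deliberately simple keyword classifier. This is a minimal sketch under stated assumptions, not LLMWise's routing logic, which this page does not describe: the task categories, keyword lists, and model identifiers (`gpt-coding`, `claude-writing`, `gemini-translate`, `default-model`) are hypothetical placeholders.

```python
import re
from typing import Optional

# Hypothetical routing table: task category -> model identifier.
# These names are placeholders, not real LLMWise model IDs.
ROUTES = {
    "coding": "gpt-coding",
    "creative": "claude-writing",
    "translation": "gemini-translate",
}
DEFAULT_MODEL = "default-model"

# Hypothetical keyword lists used to guess the task category.
KEYWORDS = {
    "coding": {"code", "function", "bug", "python", "compile", "stack trace"},
    "creative": {"poem", "story", "slogan", "lyrics"},
    "translation": {"translate", "translation"},
}


def classify(prompt: str) -> Optional[str]:
    """Return the category with the most keyword hits, or None if none match."""
    text = prompt.lower()
    scores = {
        category: sum(
            # Count whole-word (or whole-phrase) occurrences of each keyword.
            len(re.findall(rf"\b{re.escape(word)}\b", text))
            for word in words
        )
        for category, words in KEYWORDS.items()
    }
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else None


def route(prompt: str) -> str:
    """Pick a model for the prompt, falling back to a default model."""
    category = classify(prompt)
    return ROUTES[category] if category else DEFAULT_MODEL
```

For example, `route("Fix this bug in my Python function")` selects the coding model. A production router would more plausibly use a trained classifier or an LLM-based judge; keyword matching only keeps the sketch dependency-free.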

Compare & Blend

The Compare & Blend feature runs a prompt across several models simultaneously, so users can review the responses side by side and judge which model best fits their needs. Blending goes a step further by combining the strongest parts of each model's response into a single, cohesive answer.
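Running one prompt against several models at once is essentially a concurrent fan-out followed by a side-by-side view. The sketch below assumes each model is wrapped in an async callable; the `fake_model` stubs stand in for real provider calls and are hypothetical, and the blending step is not shown because this page does not say how LLMWise merges responses.

```python
import asyncio
from typing import Awaitable, Callable, Dict

ModelFn = Callable[[str], Awaitable[str]]


def fake_model(name: str, delay: float) -> ModelFn:
    """Build a hypothetical stand-in for a provider API call."""
    async def call(prompt: str) -> str:
        await asyncio.sleep(delay)  # simulate network latency
        return f"[{name}] answer to: {prompt}"
    return call


async def compare(prompt: str, models: Dict[str, ModelFn]) -> Dict[str, str]:
    """Send the same prompt to every model concurrently and collect the replies."""
    names = list(models)
    # gather() runs the calls concurrently and returns results in input order.
    replies = await asyncio.gather(*(models[n](prompt) for n in names))
    return dict(zip(names, replies))


if __name__ == "__main__":
    demo_models = {
        "model-a": fake_model("model-a", 0.2),
        "model-b": fake_model("model-b", 0.1),
    }
    results = asyncio.run(compare("Summarize HTTP/2 in one line", demo_models))
    for name, reply in results.items():
        print(f"{name:>8}: {reply}")
```

Because the calls overlap, total wall time is close to the slowest model's latency rather than the sum of all latencies. If one provider error should not abort the whole comparison, `asyncio.gather(..., return_exceptions=True)` is the usual adjustment.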

Circuit-Breaker Failover

LLMWise provides resilience through a circuit-breaker failover mechanism. If a primary model provider goes down, LLMWise automatically reroutes requests to backup models, helping applications stay operational and maintain service continuity during provider outages.
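The circuit-breaker pattern itself is standard: after a run of consecutive failures, a provider's breaker "opens" and the provider is skipped until a cooldown expires, while requests fall through to the next provider in line. The sketch below is a generic implementation of that pattern with assumed defaults (`failure_threshold=3`, `cooldown_s=30.0`) and hypothetical provider callables; it is not LLMWise's code.

```python
import time
from typing import Callable, List, Optional, Tuple


class CircuitBreaker:
    """Tracks consecutive failures for one provider."""

    def __init__(self, failure_threshold: int = 3, cooldown_s: float = 30.0,
                 clock: Callable[[], float] = time.monotonic):
        self.failure_threshold = failure_threshold
        self.cooldown_s = cooldown_s
        self.clock = clock  # injectable so tests can control time
        self.failures = 0
        self.opened_at: Optional[float] = None

    def allows_request(self) -> bool:
        if self.opened_at is None:
            return True  # closed: traffic flows normally
        # Open: allow a trial request (half-open) once the cooldown has passed.
        return self.clock() - self.opened_at >= self.cooldown_s

    def record_success(self) -> None:
        self.failures = 0
        self.opened_at = None  # close the breaker again

    def record_failure(self) -> None:
        self.failures += 1
        if self.failures >= self.failure_threshold:
            self.opened_at = self.clock()  # trip, or re-trip after a failed trial


Provider = Tuple[str, Callable[[str], str], CircuitBreaker]


def call_with_failover(prompt: str, providers: List[Provider]) -> str:
    """Try providers in priority order, skipping any whose breaker is open."""
    last_error: Optional[Exception] = None
    for _name, call, breaker in providers:
        if not breaker.allows_request():
            continue
        try:
            reply = call(prompt)
        except Exception as exc:  # treat any error as a provider failure
            breaker.record_failure()
            last_error = exc
            continue
        breaker.record_success()
        return reply
    raise RuntimeError("all providers unavailable") from last_error
```

Skipping an open provider outright, instead of retrying it on every request, is what keeps a dead upstream from adding its timeout to each call during an outage.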

Test & Optimize

LLMWise offers testing and optimization tools that let developers benchmark model performance, run batch tests, and set optimization policies for speed, cost, or reliability. Automated regression checks help catch updates that degrade existing functionality, which matters for developers who depend on stable AI integrations.
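An optimization policy can be read as a rule for picking a model from benchmark results. The sketch below assumes per-model measurements already exist; the `ModelStats` fields and the `min_success` gate are hypothetical, and LLMWise's actual policies may weigh these factors differently.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class ModelStats:
    name: str
    p50_latency_ms: float     # median latency from a benchmark run
    usd_per_1k_tokens: float  # blended price per 1,000 tokens
    success_rate: float       # fraction of test prompts that passed (0..1)


def pick_model(models: Iterable[ModelStats], policy: str,
               min_success: float = 0.0) -> ModelStats:
    """Choose a model according to a speed, cost, or reliability policy.

    Models below `min_success` are excluded first, so a fast or cheap model
    that fails the regression suite is never selected.
    """
    eligible = [m for m in models if m.success_rate >= min_success]
    if not eligible:
        raise ValueError("no model meets the minimum success rate")
    if policy == "speed":
        return min(eligible, key=lambda m: m.p50_latency_ms)
    if policy == "cost":
        return min(eligible, key=lambda m: m.usd_per_1k_tokens)
    if policy == "reliability":
        return max(eligible, key=lambda m: m.success_rate)
    raise ValueError(f"unknown policy: {policy!r}")
```

Gating on a minimum pass rate before optimizing is one way to combine the regression-check and optimization ideas: cost or speed only decide among models that already behave correctly.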

ltx2.site

Unified Audio-Video Generation

LTX-2's core capability is its one-shot generation of synchronized video and audio within a single diffusion process. This eliminates the need for separate audio dubbing, post-production compositing, and tedious timeline alignment. The model is trained to understand physical correspondences, ensuring character lip movements align with speech, actions like door openings are accompanied by matching sound effects, and background music rhythm coordinates with on-screen motion. This integrated approach delivers a complete, coherent audiovisual clip directly from the generation.

Professional 4K Resolution & High Frame Rate

The model is architected to support output at professional cinematic standards, specifically up to 4096x2160 (4K) resolution and approximately 50 frames per second. This high-fidelity output is sufficient for short films and commercial-grade content, providing outstanding detail and lighting performance. The native high-quality generation means the output can be used directly in professional editing pipelines without requiring additional upscaling or frame interpolation steps, a significant advantage among open-source models.
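To put these figures in perspective, the arithmetic below estimates how many frames a maximum-length clip contains and how large those frames would be uncompressed: about 1,000 frames and roughly 26.5 GB of raw pixel data. It assumes 8-bit RGB (3 bytes per pixel) and takes the approximate 50 fps and 20-second figures at face value; real output files are encoded and far smaller.

```python
WIDTH, HEIGHT = 4096, 2160  # 4K resolution stated above (DCI 4K)
FPS = 50                    # "approximately 50 frames per second"
SECONDS = 20                # maximum clip length stated in the FAQ
BYTES_PER_PIXEL = 3         # assumption: uncompressed 8-bit RGB


def clip_stats(width: int, height: int, fps: int, seconds: int,
               bytes_per_pixel: int = BYTES_PER_PIXEL) -> dict:
    """Frame count and raw (uncompressed) size of a clip."""
    frames = fps * seconds
    frame_bytes = width * height * bytes_per_pixel
    return {
        "frames": frames,
        "pixels_per_frame": width * height,
        "raw_gb": frames * frame_bytes / 1e9,  # decimal gigabytes
    }


stats = clip_stats(WIDTH, HEIGHT, FPS, SECONDS)
print(stats)
```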

Local Deployment on Consumer GPUs

A major technical advantage of LTX-2 is its deep optimization for local deployment on mainstream NVIDIA consumer graphics cards with high VRAM. The model's architecture offers inference efficiency several times higher than previous generations and reduces computational cost by approximately 50%. With support for low-precision weights (NVFP4/NVFP8), generating 4K video locally becomes feasible, granting users full data privacy, workflow control, and freedom from cloud service dependencies and recurring subscription fees.
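Low-precision weights matter on consumer GPUs because weight memory scales with bits per parameter: halving the bit width halves the footprint. The estimate below covers model weights only (not activations, text encoders, or decoders) and uses a hypothetical parameter count, since this page does not state LTX-2's size. It also ignores the small per-block scale factors that formats such as NVFP4 store alongside the 4-bit values.

```python
# Hypothetical parameter count for illustration only; not LTX-2's published size.
PARAMS = 10_000_000_000

BITS_PER_WEIGHT = {
    "16-bit (FP16/BF16)": 16,
    "8-bit": 8,
    "4-bit (e.g. NVFP4)": 4,
}


def weight_gib(params: int, bits: int) -> float:
    """Approximate weight storage in GiB, ignoring scale-factor overhead."""
    return params * bits / 8 / 2**30


for fmt, bits in BITS_PER_WEIGHT.items():
    print(f"{fmt:<20} {weight_gib(PARAMS, bits):6.2f} GiB")
```

Under this assumption the weights shrink from about 18.6 GiB at 16 bits to about 4.7 GiB at 4 bits, which is the kind of reduction that moves a model from workstation cards into the range of high-VRAM consumer GPUs.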

Native ComfyUI Integration & Flexible Control

LTX-2 offers advanced users a highly flexible workflow through its native integration with ComfyUI, a node-based visual programming interface. This allows intricate pipeline building, customization, and experimentation. The model supports multiple control methods, including text prompts, image inputs, and sketches, and provides configurable quality and speed modes (Fast, Pro, Ultra) so users can balance generation quality against processing time for each project.
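ComfyUI workflows can also be queued programmatically through the ComfyUI server's HTTP `/prompt` endpoint, which accepts a JSON body of the form `{"prompt": <workflow>}`. This sketch assumes a ComfyUI instance on its default local address (`http://127.0.0.1:8188`) and a workflow exported in ComfyUI's API format; which nodes an LTX-2 graph contains depends entirely on your exported workflow and is not assumed here.

```python
import json
import urllib.request

COMFYUI_URL = "http://127.0.0.1:8188"  # ComfyUI's default local address


def build_prompt_request(workflow: dict,
                         base_url: str = COMFYUI_URL) -> urllib.request.Request:
    """Wrap an API-format workflow in the JSON body the /prompt endpoint expects."""
    body = json.dumps({"prompt": workflow}).encode("utf-8")
    return urllib.request.Request(
        f"{base_url}/prompt",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def queue_workflow(workflow: dict, base_url: str = COMFYUI_URL) -> dict:
    """Send the workflow to a running ComfyUI server and return its JSON reply."""
    with urllib.request.urlopen(build_prompt_request(workflow, base_url)) as resp:
        return json.load(resp)
```

To use it, load your exported API-format JSON with `json.load`, edit the inputs of the relevant nodes (for example the text-prompt node, whose ID varies by workflow), and pass the resulting dict to `queue_workflow`. The server's reply identifies the queued job.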

Use Cases

LLMWise

Multi-Model AI Development

Developers can leverage LLMWise to streamline the process of developing AI applications that require different capabilities. For instance, a project might need sophisticated language understanding for chatbots, high-quality translation for internationalization, and creative writing for marketing content. LLMWise allows developers to access the best tool for each job without juggling multiple subscriptions.

Cost-Effective Prototyping

Businesses can utilize the 30 free models available through LLMWise to prototype and test various AI solutions without incurring initial costs. This enables teams to experiment with different models and determine the best fit for their applications before committing to premium services, significantly lowering the barrier to entry for AI adoption.

Enhanced AI Quality Assurance

Quality assurance teams can use the Compare mode to evaluate how different models respond to the same input. This process helps identify edge cases and ensures that the selected model performs reliably across a range of scenarios, ultimately leading to more robust and dependable AI applications.

Flexible Integration for Startups

Startups can benefit from LLMWise's BYOK (Bring Your Own Keys) feature, which lets them plug in API keys they already hold for various providers. They can reuse keys they already pay for rather than buying separate access, while still gaining failover routing that keeps their applications resilient and expenses under control.

ltx2.site

Prototyping for Film and Animation

Independent filmmakers and animation studios can use LTX-2 to rapidly prototype scenes, generate concept clips, and visualize storyboards with synchronized sound. The ability to produce up to 20 seconds of coherent, high-frame-rate 4K video with matching audio allows for the creation of compelling pitch materials and pre-visualization assets without the massive time and resource investment of traditional production methods, accelerating the creative development cycle.

AI Research and Model Development

AI researchers and developers working on multimodal systems can utilize the open-source LTX-2 model as a state-of-the-art baseline or a component for further experimentation. Its publicly available architecture and code allow for deep study into joint audio-video diffusion processes, fine-tuning on custom datasets, and the development of new control mechanisms or extensions, pushing forward the entire field of generative multimedia AI.

Dynamic Content for Social Media & Marketing

Digital marketers and social media content creators can use LTX-2 to produce distinctive, eye-catching short-form video with tightly synchronized audio. This suits advertisements, product showcases, and branded storytelling clips where high production value is key. Local operation keeps brand assets and prompts confidential, and fast generation enables rapid iteration on content ideas.

Game Development and Interactive Media

Game developers can integrate LTX-2 into their workflow to dynamically generate in-game cutscenes, character dialogue sequences, or environmental ambiance videos with matching sound effects. The model's ability to sync actions with sounds (like footsteps or door creaks) and dialogue with lip movements makes it a powerful tool for creating immersive, responsive narrative elements, especially for indie developers with limited voice-acting and animation budgets.

Overview

About LLMWise

LLMWise is an API that simplifies integrating and using multiple large language models (LLMs) from leading AI providers. By consolidating access to models from OpenAI, Anthropic, Google, Meta, xAI, and DeepSeek behind a unified interface, it removes the need for developers to manage numerous subscriptions and APIs. Its core functionality is intelligent routing, which automatically selects the most suitable model for each task, whether coding, creative writing, or translation, so developers can focus on their applications rather than on the details of individual provider APIs. LLMWise is aimed at developers and businesses that want access to the best available models, with usage-based pricing that supports cost efficiency and scalability.

About ltx2.site

LTX-2, accessible via ltx2.site, is an open-source multimodal AI model developed by Lightricks for synchronized audio-video generation. It produces cinematic video clips with synchronized audio in a single, unified generation process, and is aimed at AI researchers, developers, digital artists, and professional content creators who need professional-grade output without cloud subscriptions or proprietary software. Its core value proposition is generating up to 20 seconds of coherent 4K video at approximately 50 frames per second, with dialogue, sound effects, and background music aligned to on-screen actions. A key differentiator is local deployment on consumer-grade NVIDIA GPUs, which gives users full control over their workflow, data, and computational resources. Native integration with ComfyUI adds a flexible node-based interface for customization and pipeline building, making LTX-2 a viable, high-quality open-source option for anyone working on AI-generated multimedia.

Frequently Asked Questions

LLMWise FAQ

How does LLMWise optimize model selection?

LLMWise employs an intelligent routing mechanism that analyzes the nature of each prompt and directs it to the most suitable LLM. This ensures that users receive the best possible response based on the specific capabilities of each model.

Can I use my existing API keys with LLMWise?

Yes, LLMWise supports the Bring Your Own Keys (BYOK) feature, allowing you to integrate your existing API keys from different providers. This flexibility enables you to take advantage of failover routing while managing costs effectively.

What happens if a model provider goes down?

LLMWise has a circuit-breaker failover mechanism that automatically reroutes requests to backup models when a primary provider is unavailable. This helps your applications keep functioning during a provider outage.

Are there any subscription fees associated with LLMWise?

LLMWise operates on a pay-as-you-go model, which means you only pay for what you use with no monthly subscription fees. New users receive 20 trial credits that never expire, and there are 30 models available at zero charge for ongoing use.

ltx2.site FAQ

What hardware is required to run LTX-2 locally?

LTX-2 is optimized for local deployment on consumer-grade NVIDIA GPUs. The primary requirement is a graphics card with sufficient VRAM (Video RAM). For generating high-quality 4K video, a high-VRAM GPU is recommended. The model's efficiency improvements and support for low-precision weights (like NVFP4/NVFP8) make it feasible to run on capable consumer hardware, significantly reducing the barrier to entry for professional-grade local audio-video generation compared to previous models.

How does LTX-2 achieve synchronization between audio and video?

LTX-2 uses a multimodal diffusion architecture that jointly models three dimensions: temporal (video motion between frames), spatial (visual content per frame), and acoustic (audio waveforms). During its training on vast datasets, the model learns the physical and semantic correspondences between actions and sounds. This allows it to generate, in a single cohesive process, video where elements like lip movements are temporally aligned with generated speech waveforms, and on-screen actions are paired with appropriate sound effects.
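The temporal alignment described above can be made concrete by mapping video frames to audio samples. The sketch assumes a 48 kHz audio track together with the approximate 50 fps frame rate stated on this page; LTX-2's actual audio sample rate and internal representation are not given here, so treat both numbers as illustrative.

```python
AUDIO_SR = 48_000  # assumption: audio sample rate in Hz
VIDEO_FPS = 50     # approximate frame rate stated on this page


def samples_for_frame(frame: int, fps: int = VIDEO_FPS,
                      sr: int = AUDIO_SR) -> range:
    """Audio sample indices that play while a given video frame is on screen."""
    start = frame * sr // fps
    end = (frame + 1) * sr // fps
    return range(start, end)


def frame_for_sample(sample: int, fps: int = VIDEO_FPS,
                     sr: int = AUDIO_SR) -> int:
    """Video frame that is visible when a given audio sample plays."""
    return sample * fps // sr


# A sound event at t = 1.5 s (e.g. a door slam) falls on frame 75.
event_sample = int(1.5 * AUDIO_SR)
print(frame_for_sample(event_sample), samples_for_frame(75))
```

At these rates each frame spans 960 samples (20 ms), which is the granularity at which lip movements and sound effects have to line up with the picture.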

What is the maximum output length and quality?

A single generation with LTX-2 can produce up to approximately 20 seconds of continuous, coherent audio-video content. In terms of quality, the model officially supports output resolutions up to 4096x2160 (4K) and frame rates around 50 FPS. This emphasis on coherence reduces visual flicker and structural collapse across frames, making the output suitable for narrative scenes and camera movements, rather than just short, disjointed animated clips.

Is LTX-2 completely free to use?

Yes, LTX-2 is an open-source project. The model weights, code, and architecture are publicly available, typically through its GitHub repository. This means there are no licensing fees or subscription costs to use the core technology. The only potential costs are the computational resources required to run it, namely the electricity and hardware (GPU), which you own and control when running the model locally on your own machine.

Alternatives

LLMWise Alternatives

LLMWise is an API that streamlines access to many large language models (LLMs), including GPT, Claude, and Gemini. It belongs to the AI Assistants category and serves developers who want the best AI capabilities without managing multiple providers. Users often look for alternatives because of differences in pricing, feature sets, or platform requirements that better fit their applications. When evaluating alternatives, look for intelligent routing to optimize model usage, reliability features such as failover, and flexible pricing such as pay-per-use. Also assess ease of integration and the ability to benchmark and optimize performance, so that the chosen solution aligns with your development goals.

ltx2.site Alternatives

LTX-2, accessible via ltx2.site, is an open-source multimodal AI model for synchronized audio-video generation. It represents a significant advance in the AI video creation category, producing high-quality 4K clips with aligned audio in a single, local process. Users may seek alternatives for various reasons, including different pricing models, cloud-based access, specific features such as longer clip lengths or different artistic styles, or simpler interfaces that do not require technical deployment. When evaluating alternatives, key considerations include the core technology (text-to-video, image-to-video), output quality (resolution, frame rate), audio synchronization capabilities, deployment method (cloud vs. local), cost structure, and the level of technical expertise required for operation and customization.