OGimagen vs OpenMark AI

Side-by-side comparison to help you choose the right tool.

OGimagen is an AI-powered generator that creates and delivers optimized Open Graph, Twitter, and LinkedIn images with ready-to-use meta tags.

Last updated: March 11, 2026

OpenMark AI benchmarks 100+ LLMs on your task for cost, speed, quality, and stability. It is browser-based, and hosted runs need no provider API keys.

Visual Comparison

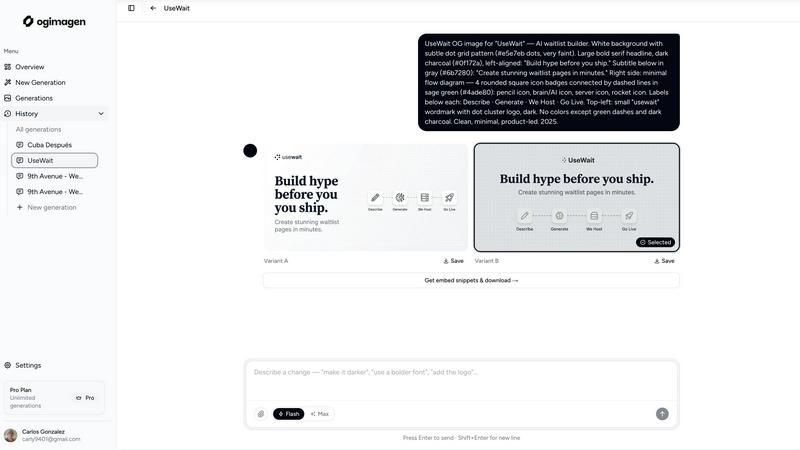

OGimagen

OpenMark AI

Overview

About OGimagen

OGimagen is an AI-powered platform that automates the creation of Open Graph (OG) images and social media cards. It addresses the technical and design challenges developers and content creators face when generating platform-specific preview images for shared links. Its core value is turning simple text inputs, such as a title and description, into production-ready social cards within seconds.

The tool is built for developers, marketers, and website owners who need professional-looking link previews on Facebook, X (Twitter), and LinkedIn without design software or manual image editing. OGimagen generates images in the pixel dimensions it targets for each platform (1200x630 for OG, 1200x600 for Twitter, 1200x627 for LinkedIn) and serves them through Cloudflare's global CDN. It also provides ready-to-paste meta tag snippets for major web frameworks, including Next.js, Astro, and SvelteKit.

The aim of this end-to-end workflow is to improve click-through rates by making shared links display engaging, on-brand preview images with minimal effort and no design skills.
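To make the meta tag step concrete, here is a minimal TypeScript sketch of how such snippets could be assembled by hand. It is not OGimagen's actual output or API. The function names, the `cdn.example.com` URL, and the choice to emit `og:image` tags for LinkedIn are assumptions made for illustration. The pixel sizes are the ones listed above, and the tag names follow the public Open Graph and X (Twitter) card conventions.

```typescript
// Hypothetical helper: builds image meta tags for one platform.
// Sizes are the ones quoted in the text; everything else is illustrative.
type Platform = "og" | "twitter" | "linkedin";

const CARD_SIZES: Record<Platform, { width: number; height: number }> = {
  og: { width: 1200, height: 630 },
  twitter: { width: 1200, height: 600 },
  linkedin: { width: 1200, height: 627 },
};

// Escape characters that would break an HTML attribute value.
function escapeAttr(value: string): string {
  return value
    .replace(/&/g, "&amp;")
    .replace(/"/g, "&quot;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;");
}

function imageTags(platform: Platform, imageUrl: string): string[] {
  const url = escapeAttr(imageUrl);
  if (platform === "twitter") {
    // Large-image card; the image itself is expected to be 1200x600.
    return [
      `<meta name="twitter:card" content="summary_large_image" />`,
      `<meta name="twitter:image" content="${url}" />`,
    ];
  }
  // Assumption: LinkedIn previews are driven by the same og:image tags.
  const { width, height } = CARD_SIZES[platform];
  return [
    `<meta property="og:image" content="${url}" />`,
    `<meta property="og:image:width" content="${width}" />`,
    `<meta property="og:image:height" content="${height}" />`,
  ];
}

// Usage: paste the joined string into the page <head>.
const headSnippet = [
  ...imageTags("og", "https://cdn.example.com/cards/post-1-og.png"),
  ...imageTags("twitter", "https://cdn.example.com/cards/post-1-twitter.png"),
].join("\n");
console.log(headSnippet);
```

In a framework such as Next.js, Astro, or SvelteKit, the same values would normally go through the framework's own head or metadata mechanism rather than a raw string.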

About OpenMark AI

OpenMark AI is a web application for task-level LLM benchmarking. You describe what you want to test in plain language, run the same prompts against many models in one session, and compare cost per request, latency, scored quality, and stability across repeat runs, so you see variance, not a single lucky output.
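As a rough illustration of what "stability across repeat runs" can mean numerically, the sketch below summarizes the quality scores from repeated runs of one prompt on one model. The scoring scale, the `summarizeRuns` name, and the use of population standard deviation are assumptions for this example, not a description of how OpenMark AI computes its stability metric.

```typescript
// Hypothetical summary of repeated-run scores for a single model.
interface RunStats {
  mean: number;
  stdDev: number; // population standard deviation; lower means more stable
  min: number;
  max: number;
}

function summarizeRuns(scores: number[]): RunStats {
  if (scores.length === 0) {
    throw new Error("summarizeRuns needs at least one score");
  }
  const mean = scores.reduce((sum, s) => sum + s, 0) / scores.length;
  const variance =
    scores.reduce((sum, s) => sum + (s - mean) * (s - mean), 0) / scores.length;
  return {
    mean,
    stdDev: Math.sqrt(variance),
    min: Math.min(...scores),
    max: Math.max(...scores),
  };
}

// Placeholder scores, not real benchmark output.
const stats = summarizeRuns([2, 4, 4, 4, 5, 5, 7, 9]);
console.log(stats); // mean 5, stdDev 2, min 2, max 9
```

Two models with the same mean score can differ widely in `stdDev`, which is the gap a single run hides.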

The product is built for developers and product teams who need to choose or validate a model before shipping an AI feature. Hosted benchmarking uses credits, so you do not need to configure separate OpenAI, Anthropic, or Google API keys for every comparison.

You get side-by-side results with real API calls to models, not cached marketing numbers. Use it when you care about cost efficiency (quality relative to what you pay), not just the cheapest token price on a datasheet.
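One simple way to read "quality relative to what you pay" is quality score divided by cost per request. The sketch below ranks models on that ratio. The field names, the ratio itself, and the sample values are assumptions chosen for illustration. They are not OpenMark AI's formula or real benchmark results.

```typescript
// Hypothetical per-model benchmark row.
interface ModelResult {
  model: string;
  quality: number;           // scored quality, assumed to be on a 0..1 scale
  costPerRequestUsd: number; // measured cost of one request
}

type RankedResult = ModelResult & { qualityPerDollar: number };

function rankByCostEfficiency(results: ModelResult[]): RankedResult[] {
  return results
    .filter((r) => r.costPerRequestUsd > 0) // skip rows that would divide by zero
    .map((r) => ({ ...r, qualityPerDollar: r.quality / r.costPerRequestUsd }))
    .sort((a, b) => b.qualityPerDollar - a.qualityPerDollar);
}

// Placeholder values only.
const ranked = rankByCostEfficiency([
  { model: "model-a", quality: 0.9, costPerRequestUsd: 0.03 },
  { model: "model-b", quality: 0.8, costPerRequestUsd: 0.002 },
  { model: "model-c", quality: 0.2, costPerRequestUsd: 0.001 },
]);
console.log(ranked.map((r) => r.model)); // model-b, model-c, model-a
```

In this made-up data, model-c is the cheapest per request but model-b ranks first, because it delivers far more quality for each dollar spent.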

OpenMark AI supports a large catalog of models and focuses on pre-deployment decisions: which model fits this workflow, at what cost, and whether outputs are consistent when you run the same task again. Free and paid plans are available; details are shown in the in-app billing section.