Agenta vs Fallom

Side-by-side comparison to help you choose the right tool.

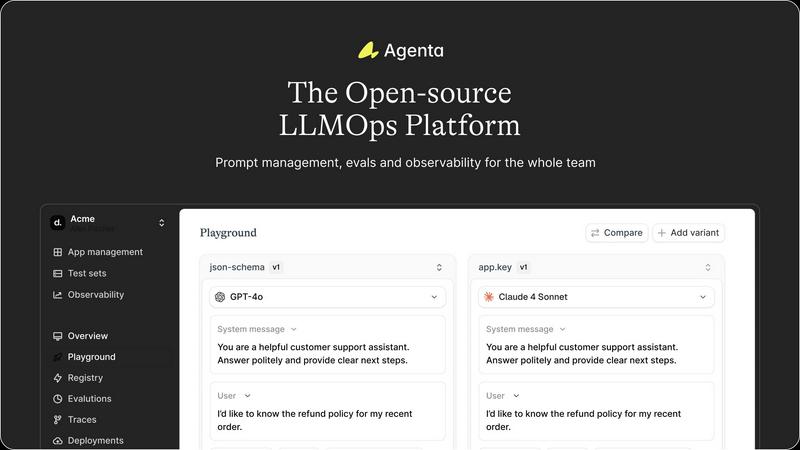

Agenta is an open-source LLMOps platform that centralizes prompt management, evaluation, and observability for reliable LLM applications.

Last updated: March 1, 2026

Fallom is an AI-native observability platform for real-time tracing and cost tracking of LLMs and agents.

Last updated: February 28, 2026

Feature Comparison

Agenta

Unified Experimentation Playground

Agenta offers a unified playground where teams iterate on prompts collaboratively. Users can compare different prompts and models side by side, so everyone works from the same experiments and results. This replaces scattered, ad-hoc experiments with a single structured environment.

Systematic Automated Evaluation

With Agenta, teams can replace guesswork with a systematic evaluation process. Automated evaluations let users run experiments, track results, and validate changes in an organized manner. Agenta also supports a range of evaluators, including LLM-as-a-judge, so teams can choose how LLM output is scored.
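As a rough illustration of what a systematic evaluation loop looks like, the sketch below scores two prompt variants against a shared test set. It does not use Agenta's SDK: `EvalCase`, `evaluate`, `exact_match_judge`, and the two variants are hypothetical names, and the variants are stubbed with fixed answers instead of real model calls. An LLM-as-a-judge grader would slot in as another `judge` function with the same signature.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalCase:
    question: str
    expected: str


def exact_match_judge(output: str, expected: str) -> float:
    """Score 1.0 when the output matches the expected answer, ignoring case and whitespace."""
    return 1.0 if output.strip().lower() == expected.strip().lower() else 0.0


def evaluate(
    app: Callable[[str], str],
    cases: list[EvalCase],
    judge: Callable[[str, str], float],
) -> float:
    """Run every case through `app` and return the mean judge score."""
    scores = [judge(app(case.question), case.expected) for case in cases]
    return sum(scores) / len(scores)


cases = [EvalCase("capital of France?", "Paris"), EvalCase("2 + 2?", "4")]

# Stubbed variants (assumption): real variants would call an LLM with different prompts.
variant_a = lambda q: {"capital of France?": "paris", "2 + 2?": "5"}[q]
variant_b = lambda q: {"capital of France?": "Paris", "2 + 2?": "4"}[q]

score_a = evaluate(variant_a, cases, exact_match_judge)
score_b = evaluate(variant_b, cases, exact_match_judge)
print(f"variant A: {score_a:.2f}, variant B: {score_b:.2f}")
```

Keeping the test set and the judge fixed while only the variant changes is what makes the two scores comparable.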

Comprehensive Production Observability

Agenta provides real-time observability for production systems, allowing teams to monitor performance and detect regressions. By tracing every request, users can pinpoint failure points with precision. This feature enhances debugging capabilities, enabling teams to swiftly identify and resolve issues.

Collaborative Workflow Integration

The platform fosters collaboration among product managers, developers, and domain experts by providing a user-friendly interface for prompt editing and experimentation. This feature empowers all team members to contribute to the evaluation process and compare experiments without needing extensive technical skills, promoting a more integrated workflow.

Fallom

Real-time Observability

Fallom provides real-time observability for AI agents, allowing users to track tool calls, analyze timing, and debug issues effectively. By visualizing every LLM call, teams can gain insights into performance and usage patterns, ensuring swift identification and resolution of anomalies.

Cost Attribution

The platform offers detailed cost attribution features that track spending per model, user, and team. This transparency aids in budgeting and chargeback processes, enabling organizations to understand their operational costs clearly and allocate resources efficiently.
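To make the idea of per-dimension cost attribution concrete, here is a minimal sketch that totals spend by model, user, or team from a list of call records. It is not Fallom's implementation or API: the record fields, the `attribute_costs` helper, the model names, and the prices are all assumptions chosen for illustration. Costs are kept as integer micro-dollars to avoid floating-point rounding.

```python
from collections import defaultdict

# Hypothetical prices in micro-USD per token; real prices vary by provider and model.
PRICE_PER_TOKEN = {
    "model-small": {"input": 1, "output": 2},
    "model-large": {"input": 10, "output": 30},
}

# Hypothetical call records, as a tracing layer might capture them.
calls = [
    {"model": "model-small", "user": "alice", "team": "search", "input_tokens": 1000, "output_tokens": 500},
    {"model": "model-large", "user": "bob", "team": "search", "input_tokens": 200, "output_tokens": 100},
    {"model": "model-small", "user": "alice", "team": "support", "input_tokens": 300, "output_tokens": 100},
]


def call_cost(call: dict) -> int:
    """Cost of a single call in micro-USD, from its token counts and model price."""
    price = PRICE_PER_TOKEN[call["model"]]
    return call["input_tokens"] * price["input"] + call["output_tokens"] * price["output"]


def attribute_costs(calls: list[dict], dimension: str) -> dict[str, int]:
    """Sum call costs grouped by one dimension, such as 'model', 'user', or 'team'."""
    totals: dict[str, int] = defaultdict(int)
    for call in calls:
        totals[call[dimension]] += call_cost(call)
    return dict(totals)


by_model = attribute_costs(calls, "model")
by_team = attribute_costs(calls, "team")
print(by_model, by_team)
```

The same records feed every breakdown, so a chargeback report by team and a budget view by model always reconcile to the same total.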

Compliance and Audit Trails

Fallom provides comprehensive audit trails to support regulatory compliance. With features such as input/output logging, model versioning, and user consent tracking, it helps organizations meet the requirements of regulations such as the EU AI Act and GDPR.

Session Tracking

Fallom allows users to group traces by session, user, or customer, providing complete context for each interaction. This feature enhances the ability to analyze workflows and improves the debugging process by maintaining a clear record of user activities and associated costs.
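The grouping described above can be sketched in a few lines. This is only an illustration under assumed field names (`session_id`, `name`, `start_ms`, `duration_ms`), not Fallom's data model; `group_traces` and `session_summary` are hypothetical helpers.

```python
from collections import defaultdict

# Hypothetical trace records; field names are assumptions for this sketch.
traces = [
    {"session_id": "s1", "name": "llm_call", "start_ms": 120, "duration_ms": 800},
    {"session_id": "s2", "name": "llm_call", "start_ms": 50, "duration_ms": 400},
    {"session_id": "s1", "name": "tool_call", "start_ms": 10, "duration_ms": 100},
]


def group_traces(traces: list[dict], key: str) -> dict[str, list[dict]]:
    """Group traces by `key` and order each group chronologically."""
    groups: dict[str, list[dict]] = defaultdict(list)
    for trace in traces:
        groups[trace[key]].append(trace)
    return {k: sorted(v, key=lambda t: t["start_ms"]) for k, v in groups.items()}


def session_summary(session_traces: list[dict]) -> dict:
    """Summarize one session: ordered step names and total time spent in steps."""
    return {
        "steps": [t["name"] for t in session_traces],
        "total_duration_ms": sum(t["duration_ms"] for t in session_traces),
    }


sessions = group_traces(traces, "session_id")
summary_s1 = session_summary(sessions["s1"])
print(summary_s1)
```

Passing a different key, for example a user or customer identifier, reuses the same grouping logic for the other views mentioned above.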

Use Cases

Agenta

Collaborative LLM Development

Agenta is ideal for teams engaged in collaborative LLM development. By centralizing prompt management and evaluation, it allows developers, product managers, and domain experts to work together seamlessly, enhancing productivity and reducing bottlenecks.

Automated Testing and Validation

Teams can leverage Agenta to automate the testing and validation of their LLM applications. By systematically evaluating changes and tracking results, organizations can ensure that their models perform as expected, leading to higher reliability in production environments.

Debugging and Trace Analysis

Agenta's comprehensive observability features enable teams to conduct in-depth debugging and trace analysis. By following each request and annotating traces, users can gather valuable insights into system performance and user feedback, facilitating continuous improvement.

Rapid Iteration for Product Launches

The platform supports rapid iteration cycles, making it suitable for organizations looking to fast-track their LLM applications to production. By utilizing Agenta's unified experimentation playground, teams can validate their models more quickly, ensuring timely launches without sacrificing quality.

Fallom

Debugging AI Workflows

Organizations can use Fallom to debug complex agentic workflows by tracing each step of LLM interactions. This lets teams identify bottlenecks or failures in real time, which speeds up resolution and improves overall system performance.

Cost Management

Fallom’s cost attribution features enable organizations to manage and analyze their AI-related expenses effectively. By tracking costs at granular levels, teams can make informed decisions regarding resource allocation and budget adjustments, enhancing financial oversight.

Compliance Audits

For companies operating under strict regulatory frameworks, Fallom provides tools to help maintain compliance. Its comprehensive audit trails and logging capabilities support organizations in preparing for audits and demonstrating adherence to regulations such as GDPR and to audit standards such as SOC 2.

Performance Monitoring

Fallom allows organizations to monitor LLM performance continuously, spotting anomalies before they escalate into significant issues. With real-time dashboards and detailed latency metrics, teams can maintain optimal performance levels for their AI systems.
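One simple way to spot a latency anomaly is to compare a high percentile of recent latencies against a baseline. The sketch below is a generic illustration, not a description of how Fallom detects anomalies: `percentile`, `latency_regressed`, the 95th-percentile choice, and the 1.2x tolerance are all assumptions.

```python
def percentile(values: list[float], pct: int) -> float:
    """Nearest-rank percentile: the smallest value with at least pct% of samples at or below it."""
    ordered = sorted(values)
    # Integer ceiling of pct * n / 100, avoiding floating-point rounding surprises.
    rank = max(1, (pct * len(ordered) + 99) // 100)
    return ordered[rank - 1]


def latency_regressed(
    baseline_ms: list[float],
    current_ms: list[float],
    pct: int = 95,
    tolerance: float = 1.2,
) -> bool:
    """Flag a regression when the current percentile exceeds the baseline by more than `tolerance`."""
    return percentile(current_ms, pct) > tolerance * percentile(baseline_ms, pct)


# Hypothetical latency samples in milliseconds.
baseline = [100] * 19 + [200]
degraded = [100] * 18 + [300, 300]
healthy = [110] * 20

print(latency_regressed(baseline, degraded))
print(latency_regressed(baseline, healthy))
```

A percentile is used instead of the mean because a few slow LLM calls can dominate user experience while barely moving the average.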

Overview

About Agenta

Agenta is an open-source LLMOps platform for AI development teams building reliable Large Language Model (LLM) applications. It provides infrastructure for the full LLM development lifecycle, from inception to deployment. By centralizing prompt management, evaluation, and observability in a single collaborative environment, Agenta helps teams reduce the unpredictability and fragmented workflows that often affect LLM projects. It is aimed at cross-functional teams, including developers, product managers, and subject matter experts, and helps them move from ad-hoc prompt management and "vibe testing" to a structured, evidence-driven approach. The platform combines three pillars of LLMOps: a unified experimentation playground, systematic automated evaluation, and comprehensive production observability. Agenta serves as the single source of truth for prompts, tests, and traces, so teams can version experiments, validate changes, and debug issues using real production data. Together, these capabilities are intended to shorten time-to-production for AI agents.

About Fallom

Fallom is an AI-native observability platform for monitoring and optimizing Large Language Model (LLM) and AI agent workloads in production, in real time. It serves engineering, product, and compliance teams by providing visibility into every interaction with LLMs. Its core capability is end-to-end tracing of AI calls, capturing prompts, model outputs, function/tool calls, token usage, latency metrics, and cost per call. Fallom is built on the OpenTelemetry standard, which keeps it vendor-agnostic, and ships an SDK for instrumenting applications quickly. This reduces the complexity of integrating multiple monitoring tools. As organizations scale their AI-powered features, Fallom helps them debug intricate workflows, attribute operational costs accurately, and maintain audit trails. These audit trails matter for compliance with regulations such as the EU AI Act and GDPR, and for audit standards such as SOC 2. By centralizing observability, Fallom makes AI operations a transparent, manageable, and cost-controllable part of the software stack.

Frequently Asked Questions

Agenta FAQ

What is LLMOps?

LLMOps refers to a set of best practices and methodologies designed to manage the lifecycle of Large Language Models. It encompasses processes such as prompt management, evaluation, deployment, and monitoring to ensure the reliability and effectiveness of LLM applications.

How does Agenta support collaboration among teams?

Agenta enhances collaboration by providing a unified platform where developers, product managers, and domain experts can work together on prompt management, evaluations, and debugging. This integration fosters communication and aligns efforts across different roles.

Can Agenta integrate with existing AI frameworks?

Yes, Agenta is designed to integrate with popular AI frameworks and model providers, including LangChain, LlamaIndex, and OpenAI. This flexibility allows teams to use their preferred tools without being locked into a specific vendor.

Is Agenta suitable for both small and large teams?

Absolutely. Agenta is designed to accommodate teams of various sizes, from small startups to large enterprises. Its collaborative features and structured processes make it adaptable to different workflows and team dynamics.

Fallom FAQ

What is Fallom?

Fallom is an AI-native observability platform designed to monitor and optimize Large Language Model interactions in production environments, providing real-time visibility into AI workloads.

How does Fallom ensure compliance?

Fallom supports compliance through comprehensive audit trails, logging of input and output, model versioning, and user consent tracking, ensuring organizations can meet regulatory requirements.

Can Fallom be integrated with existing tools?

Yes, Fallom is built on the OpenTelemetry standard and offers a vendor-agnostic SDK that allows for quick integration into existing applications, simplifying the observability process.

What types of organizations benefit from using Fallom?

Engineering, product, and compliance teams within organizations that leverage AI and LLM technologies benefit significantly from Fallom's real-time monitoring and optimization capabilities, especially those needing to adhere to strict compliance standards.

Alternatives

Agenta Alternatives

Agenta is an open-source LLMOps platform designed for the development, evaluation, and debugging of reliable Large Language Model applications. It addresses the unpredictability and fragmented workflows common in LLM development by giving AI development teams a unified collaborative environment. Users seek alternatives to Agenta for various reasons, including pricing, specific feature sets, or platform needs that Agenta does not fully meet. When evaluating alternatives, consider the ease of integration with existing workflows, the robustness of the evaluation framework, and the level of support for collaboration among cross-functional teams.

Fallom Alternatives

Fallom is an AI-native observability platform specifically designed for monitoring and optimizing Large Language Model (LLM) and AI agent workloads in production environments. It offers real-time tracing and cost tracking, providing teams with comprehensive visibility into LLM interactions and improving their debugging processes. Users often seek alternatives to Fallom for various reasons, including pricing considerations, the need for specific features, or compatibility with different platforms. When searching for an alternative, it's essential to evaluate the observability capabilities, compliance features, ease of integration, and overall cost-effectiveness to ensure the chosen solution aligns with organizational goals and operational requirements.